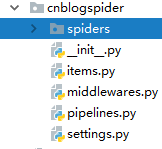

1. Create the crawler project. Open a command window in the folder where the project should live and run:

```shell
scrapy startproject cnblogspider
```

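The resulting project structure is a `cnblogspider` folder containing `scrapy.cfg` and an inner `cnblogspider` package with `items.py`, `middlewares.py`, `pipelines.py`, `settings.py` and a `spiders/` directory.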

2. Add the Item

Define the item in `items.py`:

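```python
import scrapy


class CnblogspiderItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    url = scrapy.Field()          # URL of the blog post
    cimage_urls = scrapy.Field()  # image URLs found in the post body
    cimages = scrapy.Field()      # download results; name matches IMAGES_RESULT_FIELD
    image_paths = scrapy.Field()  # local paths filled in by the image pipeline
```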

3. Create the spider. In a command window inside the project folder, run:

```shell
scrapy genspider cnblog cnblogs.com
```

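This generates the spider file `spiders/cnblog.py`.

Then override `start_urls` and the `parse` method, and add a `parse_body` callback for the individual posts.

`Request` is a class that ships with Scrapy; the spider simply instantiates it (as `scrapy.Request`) for every page it wants to fetch.

The full code is as follows: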

```python
import scrapy
from scrapy import Selector

from cnblogspider.items import CnblogspiderItem


class CnblogSpider(scrapy.Spider):
    name = 'cnblog'
    allowed_domains = ['cnblogs.com']
    start_urls = ['http://cnblogs.com/qiyeboy/default.html?page=1']

    def parse(self, response):
        # Parse the list page: first extract every post block
        papers = response.xpath(".//*[@class='day']")
        # Extract data from each post
        for paper in papers:
            url = paper.xpath(".//*[@class='postTitle']/a/@href").extract_first()
            if not url:
                continue
            # Other fields (title, time, content) could be extracted the same way
            item = CnblogspiderItem(url=url)
            request = scrapy.Request(url=response.urljoin(url), callback=self.parse_body)
            # Stash the item so parse_body can fill in the image URLs
            request.meta['item'] = item
            yield request
        # Follow the "下一页" (next page) link; [^"]+ captures the whole href,
        # whereas the earlier (\s*) only matched whitespace and never found a link
        next_page = Selector(response).re(r'<a href="([^"]+)">下一页</a>')
        if next_page:
            yield scrapy.Request(url=response.urljoin(next_page[0]), callback=self.parse)

    def parse_body(self, response):
        item = response.meta['item']
        body = response.xpath(".//*[@class='postBody']")
        # Extract image links and make them absolute so the pipeline can fetch them
        srcs = body.xpath('.//img/@src').extract()
        item['cimage_urls'] = [response.urljoin(src) for src in srcs]
        yield item
```
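The spider depends on two string-level details: the regex that finds the "下一页" (next page) link, and the fact that image `src` values can be relative or protocol-relative. The stdlib-only sketch below reproduces both with `re.findall` and `urllib.parse.urljoin`; the HTML fragment and post URL are made-up assumptions, not real cnblogs markup. It shows that a `(\s*)` group, which matches whitespace only, never captures a link.

```python
import re
from urllib.parse import urljoin

# Hypothetical fragment of a blog list page; real cnblogs markup may differ.
SAMPLE_HTML = (
    '<div class="pager">'
    '<a href="https://www.cnblogs.com/qiyeboy/default.html?page=2">下一页</a>'
    '</div>'
)

# "下一页" is the link text meaning "next page".
WHITESPACE_ONLY = r'<a href="(\s*)">下一页</a>'  # \s* matches whitespace only
NON_QUOTE = r'<a href="([^"]+)">下一页</a>'      # any run of non-quote characters


def find_next_page(html, pattern):
    """Return every captured href (re.findall returns the group values)."""
    return re.findall(pattern, html)


def absolutize(page_url, srcs):
    """Resolve relative and protocol-relative src values against the page URL."""
    return [urljoin(page_url, src) for src in srcs]


if __name__ == "__main__":
    print(find_next_page(SAMPLE_HTML, WHITESPACE_ONLY))  # []
    print(find_next_page(SAMPLE_HTML, NON_QUOTE))
    post_url = "https://www.cnblogs.com/qiyeboy/p/12345.html"  # hypothetical post URL
    print(absolutize(post_url, ["//img2020.cnblogs.com/blog/a.png", "/images/b.png"]))
```

Inside Scrapy, `response.urljoin` performs the same resolution against the response URL.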

4. Build an item pipeline to store the downloaded images

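Since the goal is to store images, we use `ImagesPipeline`, one of Scrapy's media pipelines (subclasses of `MediaPipeline`; `ImagesPipeline` also requires the Pillow library), and configure it in `settings.py`.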

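4.1 The images are saved under the directory given by `IMAGES_STORE`. To control which URLs are downloaded and where the results are recorded on the item, override `get_media_requests(item, info)` and `item_completed(results, item, info)`.

`get_media_requests` reads the item's `cimage_urls` field,

and `item_completed` writes the item's `image_paths` field.

The code is as follows: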

```python
import scrapy
from itemadapter import ItemAdapter
from scrapy.exceptions import DropItem
from scrapy.pipelines.images import ImagesPipeline


class MyImagesPiplines(ImagesPipeline):
    def get_media_requests(self, item, info):
        # One download request per image URL collected by the spider
        for image_url in ItemAdapter(item).get('cimage_urls', []):
            yield scrapy.Request(image_url)

    def item_completed(self, results, item, info):
        # results is a list of (success, info_or_failure) tuples; keep successful paths
        image_paths = [x['path'] for ok, x in results if ok]
        if not image_paths:
            raise DropItem('item contains no image')
        item['image_paths'] = image_paths
        return item
```
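`item_completed` receives `results` as a list of `(success, info)` pairs. On success, `info` is a dict with keys such as `url`, `path`, `checksum` and `status`; by default `ImagesPipeline` names each file `full/<SHA1 of the URL>.jpg`, relative to `IMAGES_STORE`. The stdlib-only sketch below imitates that data flow with made-up results. `DropItem` here is a local stand-in for `scrapy.exceptions.DropItem`, and `default_image_path` and `collect_image_paths` are hypothetical helpers, not Scrapy API.

```python
import hashlib


class DropItem(Exception):
    """Local stand-in for scrapy.exceptions.DropItem (assumption for this sketch)."""


def default_image_path(url):
    """Hypothetical helper mirroring ImagesPipeline's default naming: full/<sha1(url)>.jpg."""
    return "full/" + hashlib.sha1(url.encode("utf-8")).hexdigest() + ".jpg"


def collect_image_paths(item, results):
    """Same logic as MyImagesPiplines.item_completed, applied to plain dicts."""
    image_paths = [x["path"] for ok, x in results if ok]
    if not image_paths:
        raise DropItem("item contains no image")
    item["image_paths"] = image_paths
    return item


if __name__ == "__main__":
    urls = ["https://img.example.com/a.png", "https://img.example.com/b.png"]
    # Made-up results: one success, one failure (Scrapy passes a Failure object there).
    results = [
        (True, {"url": urls[0], "path": default_image_path(urls[0]),
                "checksum": "dummy", "status": "downloaded"}),
        (False, Exception("404 Not Found")),
    ]
    item = collect_image_paths({"cimage_urls": urls}, results)
    print(item["image_paths"])
    try:
        collect_image_paths({"cimage_urls": urls}, [(False, Exception("timeout"))])
    except DropItem as exc:
        print("dropped:", exc)
```

Failed downloads are simply skipped, and an item only gets dropped when none of its images succeeded.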

4.2 Configure the settings

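4.2.1 Scrapy's default user agent (`Scrapy/<version> (+https://scrapy.org)`) should not be used here, so uncomment `USER_AGENT` in `settings.py` and set it to your own browser's user agent string: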

```python
USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/94.0.4606.61 Safari/537.36'
```

4.2.2 Enable the pipeline

Register it in `ITEM_PIPELINES`:

```python
ITEM_PIPELINES = {
    # 'cnblogspider.pipelines.CnblogspiderPipeline': 300,
    'cnblogspider.pipelines.MyImagesPiplines': 301,
}
```
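The integer next to each pipeline path sets the order: Scrapy passes every item through the enabled pipelines from the lowest value to the highest, and values are customarily kept in the 0 to 1000 range. The sketch below only sorts a copy of the dict to show the resulting order, assuming for illustration that the commented-out `CnblogspiderPipeline` were re-enabled; `pipeline_order` is a hypothetical helper.

```python
# Copy of the setting, with CnblogspiderPipeline re-enabled for illustration only.
ITEM_PIPELINES = {
    'cnblogspider.pipelines.MyImagesPiplines': 301,
    'cnblogspider.pipelines.CnblogspiderPipeline': 300,
}


def pipeline_order(pipelines):
    """Return pipeline paths in the order items visit them (ascending value)."""
    return [path for path, _ in sorted(pipelines.items(), key=lambda kv: kv[1])]


if __name__ == '__main__':
    for path in pipeline_order(ITEM_PIPELINES):
        print(path)
```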

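4.2.3 Other image settings

The remaining settings are standard `ImagesPipeline` options; set them as your project requires.

Among them: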

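`IMAGES_STORE`: the directory where downloaded images are stored.

`IMAGES_URL_FIELD`: the item field that holds the image URLs.

`IMAGES_RESULT_FIELD`: the item field that receives the download results.

`IMAGES_EXPIRES`: the expiration period in days; images downloaded more recently than this are not downloaded again.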

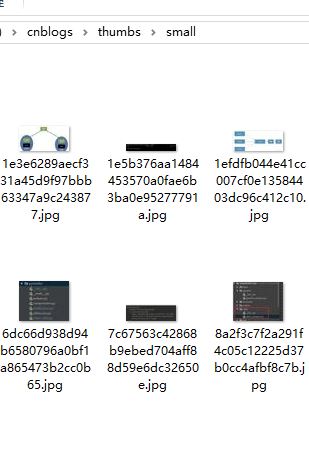

`IMAGES_THUMBS`: generates thumbnails and sets their sizes, one `(width, height)` entry per thumbnail name.

The code is as follows:

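```python
IMAGES_STORE = 'D:\\cnblogs'       # directory for downloaded images
IMAGES_URL_FIELD = 'cimage_urls'   # item field holding the image URLs
IMAGES_RESULT_FIELD = 'cimages'    # item field for the download results
IMAGES_EXPIRES = 30                # days before an image is downloaded again
IMAGES_THUMBS = {                  # thumbnail name -> (width, height)
    'small': (50, 50),
    'big': (270, 270),
}
```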
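With these settings, `ImagesPipeline` writes each original under `IMAGES_STORE/full/` and each thumbnail under `IMAGES_STORE/thumbs/<name>/`, all named after the SHA1 hash of the image URL. Thumbnails are shrunk to fit inside their box with the aspect ratio kept, and a stored image is fetched again only once it is older than `IMAGES_EXPIRES` days. The stdlib sketch below reproduces that bookkeeping; `planned_paths`, `thumb_size` and `is_expired` are hypothetical helpers, `thumb_size` only approximates Pillow's rounding, and `PureWindowsPath` keeps the `D:\cnblogs` paths identical on any OS. Because `MyImagesPiplines` overrides both `get_media_requests` and `item_completed`, `IMAGES_URL_FIELD` and `IMAGES_RESULT_FIELD` are only read by the default implementations and do not affect it.

```python
import hashlib
from pathlib import PureWindowsPath

IMAGES_STORE = 'D:\\cnblogs'
IMAGES_EXPIRES = 30
IMAGES_THUMBS = {'small': (50, 50), 'big': (270, 270)}


def planned_paths(url, store=IMAGES_STORE, thumbs=IMAGES_THUMBS):
    """Where the original and each thumbnail of `url` would be stored."""
    guid = hashlib.sha1(url.encode('utf-8')).hexdigest()
    root = PureWindowsPath(store)
    paths = {'full': root / 'full' / f'{guid}.jpg'}
    for name in thumbs:
        paths[name] = root / 'thumbs' / name / f'{guid}.jpg'
    return paths


def thumb_size(width, height, box):
    """Approximate size after shrinking into `box`, keeping aspect ratio, never enlarging."""
    scale = min(box[0] / width, box[1] / height, 1.0)
    return max(1, round(width * scale)), max(1, round(height * scale))


def is_expired(last_modified, now, expires_days=IMAGES_EXPIRES):
    """True if a stored file (timestamps in seconds) is older than `expires_days`."""
    age_days = (now - last_modified) / 60 / 60 / 24
    return age_days > expires_days


if __name__ == '__main__':
    for name, path in planned_paths('https://img.example.com/a.png').items():
        print(name, path)
    print(thumb_size(800, 400, IMAGES_THUMBS['small']))  # (50, 25)
    print(is_expired(0, 31 * 24 * 3600))                 # True
```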

5. Run the spider

In a command window inside the project folder, run:

```shell
scrapy crawl cnblog
```
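After the crawl finishes, one way to check the result is to count the files under `IMAGES_STORE`. The stdlib sketch below assumes the default `full/` and `thumbs/<name>/` layout; `summarize_store` is a hypothetical helper, and the path passed to it should be whatever you set as `IMAGES_STORE`.

```python
from pathlib import Path


def summarize_store(store):
    """Count .jpg files in full/ and in each thumbs/<name>/ folder under `store`."""
    root = Path(store)
    full_dir = root / 'full'
    summary = {'full': len(list(full_dir.glob('*.jpg'))) if full_dir.is_dir() else 0}
    thumbs_dir = root / 'thumbs'
    if thumbs_dir.is_dir():
        for sub in sorted(p for p in thumbs_dir.iterdir() if p.is_dir()):
            summary[f'thumbs/{sub.name}'] = len(list(sub.glob('*.jpg')))
    return summary


if __name__ == '__main__':
    # Point this at your IMAGES_STORE directory.
    print(summarize_store('D:\\cnblogs'))
```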
